IBM Research

The Power of Thinking Big: IBM Research’s “5 in 5”

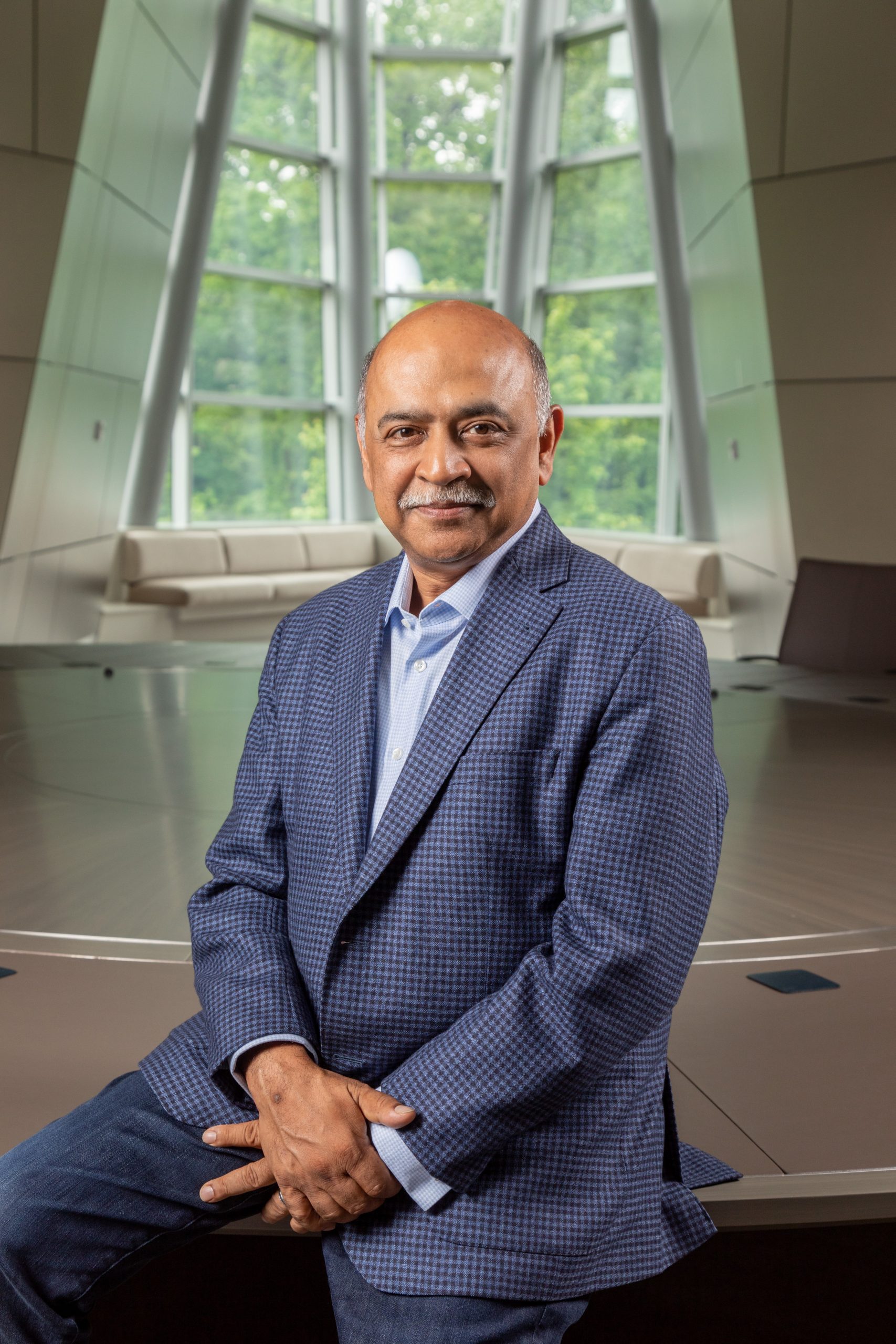

January 5, 2017 | Written by: Arvind Krishna

Great scientific leaps rarely happen incrementally. They come from setting big, ambitious goals that move discovery forward. Think of the Wright brothers’ determination to fly, President John F. Kennedy’s pledge to put a man on the moon, or IBM Research’s resolve to build a computer that could understand spoken language and beat the greatest human champions on Jeopardy!

The bigger the goal, the greater the impact on society. This approach has been a hallmark of IBM Research since our founding more than 70 years ago, and it is responsible for many world-changing inventions. In that spirit, today we’re renewing our annual “5 in 5” predictions about five technologies that we believe have the potential to change the way people work, live, and interact during the next five years.

IBM Research began the 5 in 5 conversation a decade ago as a way to stimulate interest and discussion around some of the most exciting breakthroughs coming out of our labs. Then, as now, this effort was informed by the breadth of IBM’s unmatched expertise across systems, software, services, semiconductors and a wide swath of industries.

Forecasting is always a tricky business, but over the past ten years many of our 5 in 5 predictions have proven highly accurate in spotting and accelerating emerging technologies. For example:

In 2012, IBM Research predicted that computers would not only be able to look at pictures, but understand them. The field of computer vision has advanced rapidly over the last several years, to the point where our scientists have designed systems with a computational form of sight that can examine images of skin lesions and help dermatologists identify cancerous states.

That same year, we projected that computers would “hear” what matters. Significant advances are being made in creating cognitive systems that can interpret and analyze sounds to create a holistic picture of our surroundings. Very recently, in collaboration with Rice University, IBM announced a sensor platform that can “see,” “listen,” and “talk,” and helps senior citizens stay healthy, mobile, and independent.

Also in 2012, we envisioned digital taste buds that could help people eat smarter. IBM researchers turned to the culinary arts to see whether Watson, the world’s first cognitive computing system, could help cooks discover and create original recipes with the help of flavor compound algorithms. They trained Watson on thousands of recipes and on food-pairing theories. Two years later, Chef Watson debuted at SXSW, followed by a web application for home cooks.

In 2013, our scientists anticipated that doctors would routinely use your DNA to keep you well. Full DNA sequencing is on its way to becoming a routine procedure. The following year, the New York Genome Center and IBM started a collaboration to analyze genetic data with Watson to accelerate the race to personalized, life-saving treatment for brain cancer patients. In 2015, IBM announced another collaboration with more than a dozen leading cancer institutes to accelerate clinicians’ ability to identify and personalize treatment options for their patients.

For this year’s 5 in 5, we’re struck by the powerful implications of the ongoing effort to make the invisible world visible, from the macroscopic level down to the nanoscale. Innovation in this area could enable us to dramatically improve farming, enhance energy efficiency, spot harmful pollution before it’s too late, and prevent premature cognitive decline.

Our global team of scientists and researchers is steadily bringing this capacity from the realm of science fiction to the real world. You can read a detailed summary of the remarkable innovations we believe will result from this work over the next five years here. Below is a quick overview of our 5 in 5 predictions:

With AI, our words will be a window into our mental health. In five years, what we say and write will be indicators of our mental health and physical wellbeing. Patterns in our speech and writing analyzed by cognitive systems will enable doctors and patients to predict and track early-stage developmental disorders, mental illness and degenerative neurological diseases more effectively.

Hyperimaging and AI will give us superhero vision. In five years, our ability to “see” beyond visible light will reveal new insights to help us understand the world around us. This technology will be widely available throughout our daily lives, giving us the ability to perceive or see through objects and opaque environmental conditions anytime, anywhere.

Macroscopes will help us understand Earth’s complexity in infinite detail. The physical world before our eyes only gives us a small view into what’s an infinitely interconnected and complex world. Instrumenting and collecting masses of data from every physical object, big and small, and bringing it together will reveal comprehensive solutions for our food, water and energy needs.

Medical “labs on a chip” will serve as health detectives for tracing disease at the nanoscale. New techniques that detect tiny bioparticles found in bodily fluids will reveal clues that, when combined with data from the Internet of Things, will give a full picture of our health and diagnose diseases before we experience any symptoms.

Smart sensors will detect environmental pollution at the speed of light. Environmental pollutants won’t be able to hide thanks to new sensing technologies that use silicon photonics to accurately pinpoint and monitor the quality of our environment. Combined with physical analytics and artificial intelligence, these technologies will unlock insights to help us prevent pollution and fully harness the promise of cleaner fuels like natural gas.

The combination of the data explosion and exponential growth in computation over the past fifty years has opened up whole new challenges for us to work on and solve together – challenges we never could have tackled or even foreseen in the past. This “digital disruption” is transforming the world around us.

As our latest 5 in 5 predictions show, there is no challenge too big – or too small – for us to set our sights on if we’re only bold enough to take the chance.

________________________

For more images, visit the IBM Research Flickr page.

Chairman & Chief Executive Officer, IBM